Socioaffective Alignment in Curriculum Learning for Therapeutic AI

Joshua Nathaniel Reid Ollswang

Currently Independent Researcher

Abstract

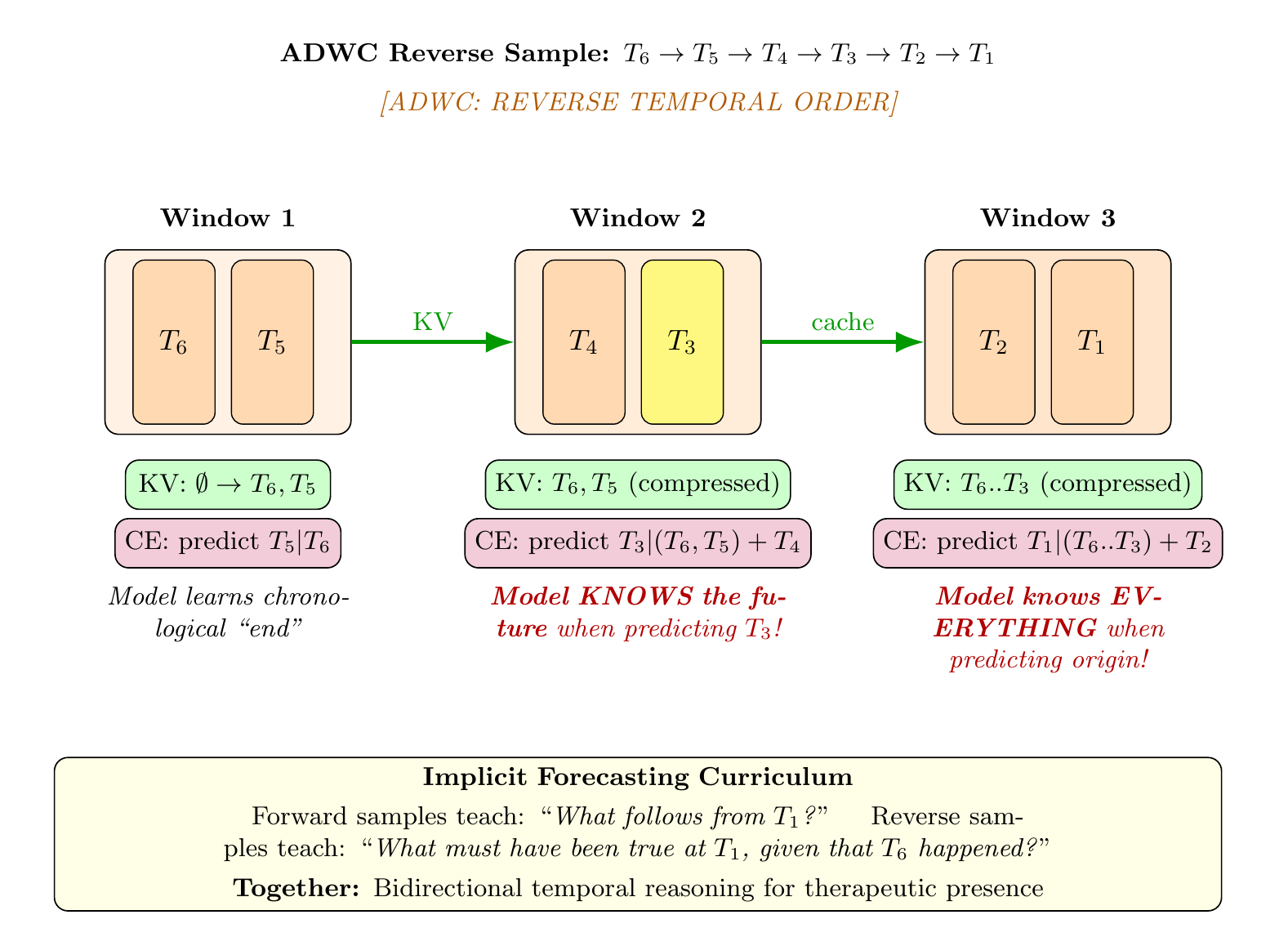

The human capacity for bonding does not necessarily distinguish the ontological categories of its counterparts, and AI systems are already co-creating millions of social and emotional bonds around the world. As such, the question of socioaffective alignment—ensuring these systems engage responsibly and even amelioratively with the emotional and relational dimensions of human experience—has become increasingly urgent. Across affective computing, AI safety, clinical psychotherapy, and attachment science, a shared recognition is forming: the design of companionate systems is as much a clinical question as an engineering one, and the need to address the psychosocial dimensions of human-AI bonding has already arrived—for dedicated mental health applications as well as beloved frontier general-purpose systems. Against this backdrop, many previous efforts to design and build AI systems for mental health support inherit the fundamental limits of monomodal human psychotherapy: every major therapeutic modality shows meaningful efficacy for specific presentations while showing limited or null effects for others. Recent publicly disclosed AI mental health applications and research, however, often overwhelmingly adopt monomodal approaches, importing these constraints wholesale. In response, we propose polytheoretical socioaffective human-AI alignment: a framework integrating multiple therapeutic orientations not as competing alternatives but as complementary lenses on human complexity, deployed adaptively with capabilities, too, for novel generativity aimed at addressing the polysemous phenomenology of human experience—a task for which neural networks’ capacity to discover patterns across high-dimensional representational spaces is uniquely fitted. Taking Sutton’s insight as guide, we do not encode clinical decision rules but design synthetic curricula enabling models to discover therapeutic insights through multiple overdetermined pedagogical layers. Carefully designed synthetic data training pipelines, we argue, achieve simultaneous precision in representing both therapeutic presence and clinical processes—encoding each through explicit and implicit patterns, through overt reasoning and latent structure alike. Intentionally shaping what attention preserves, what feed-forward layers transform, and what expert pathways activate, our methodology engineers correspondence at specific layers, threading the lowest computational primitives through with clinical relational dynamics at three levels: synthetic data architecture structures therapeutic complexity into learnable form, curriculum design sequences pedagogical exposure, and training architectures carry curriculum principles into the learning process itself. The aim is not surface fluency at the generation layer but therapeutic reasoning embedded deep in middle layers where understanding forms. To teach models coherence across ultra-long therapeutic contexts with interconnecting depth, our multi-stage pedagogical pipeline combines ontological knowledge representation, Decomposition Factorization Recomposition data schemas, Universal Hierarchical Direction & Alternative Directional Window curricula, and Rolling Recap Architecture training. The resulting corpus—even at its current nascent scale, 181,000 samples and 4.5 billion tokens—is born from our data creation scripts capable of scaling to 1040+ unique therapeutic contexts. The structured data is designed not to replicate human conceptual constraints but aimed at the incipient discovery of therapeutic patterns which may, like AlphaGo’s move 37, prove effective precisely because they transcend the limitations of monomodal human clinical training. This corpus provides a scalable foundation for training pipelines and evaluation frameworks designed aspirationally to test whether models can discover therapeutic attunement at scales and integrations beyond human clinical capacity. A more proximate aim—and what we consider the meaningful contribution—is to discover how to teach models genuinely therapeutic integrations: those that embody the depth and relational complexity of human change rather than the flattened approximations found in one-dimensional, technique-bound systems, which often fail to capture the full humanity of clinical processes, let alone aim to enable surpassing it.

Key Findings

Architectures

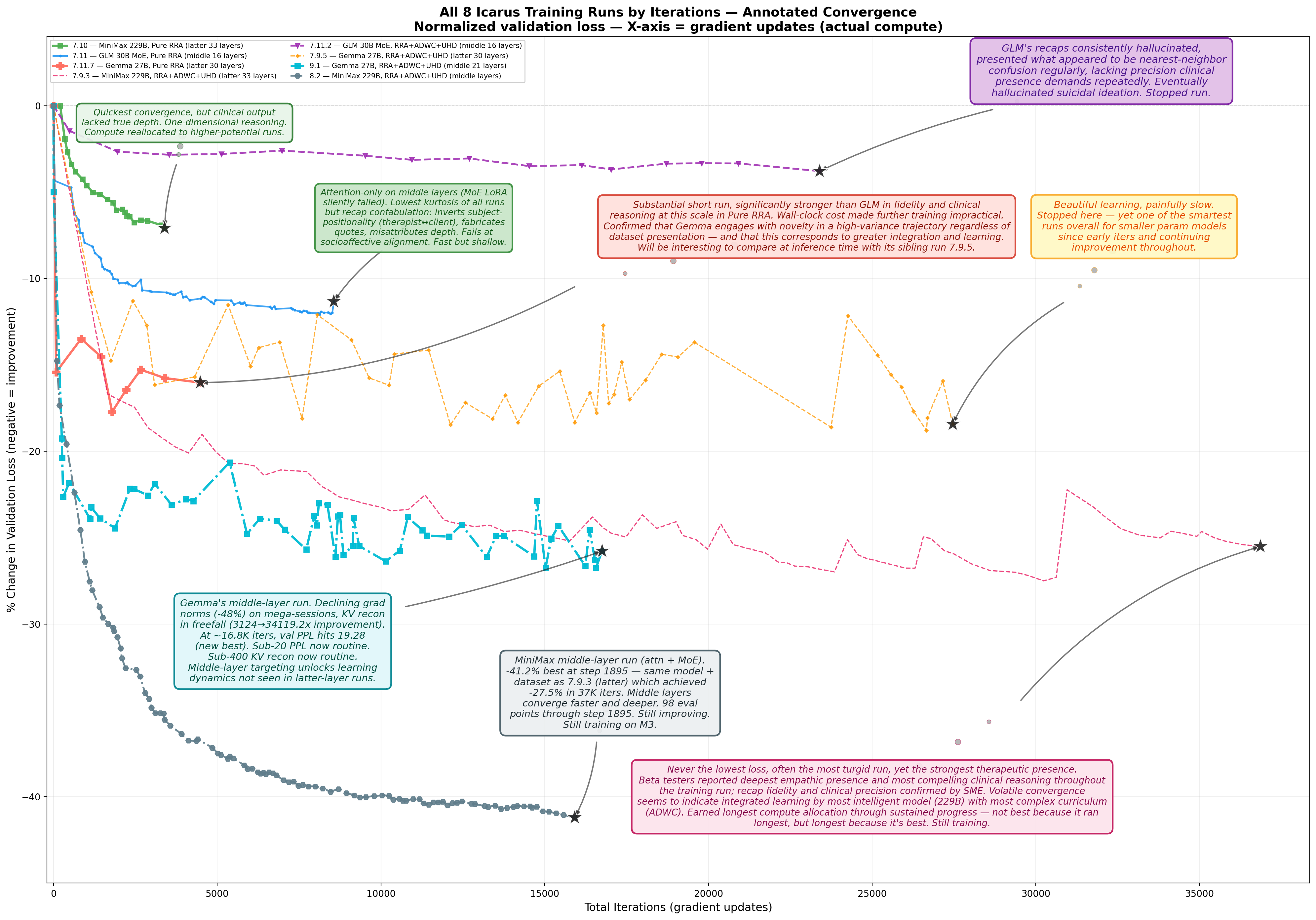

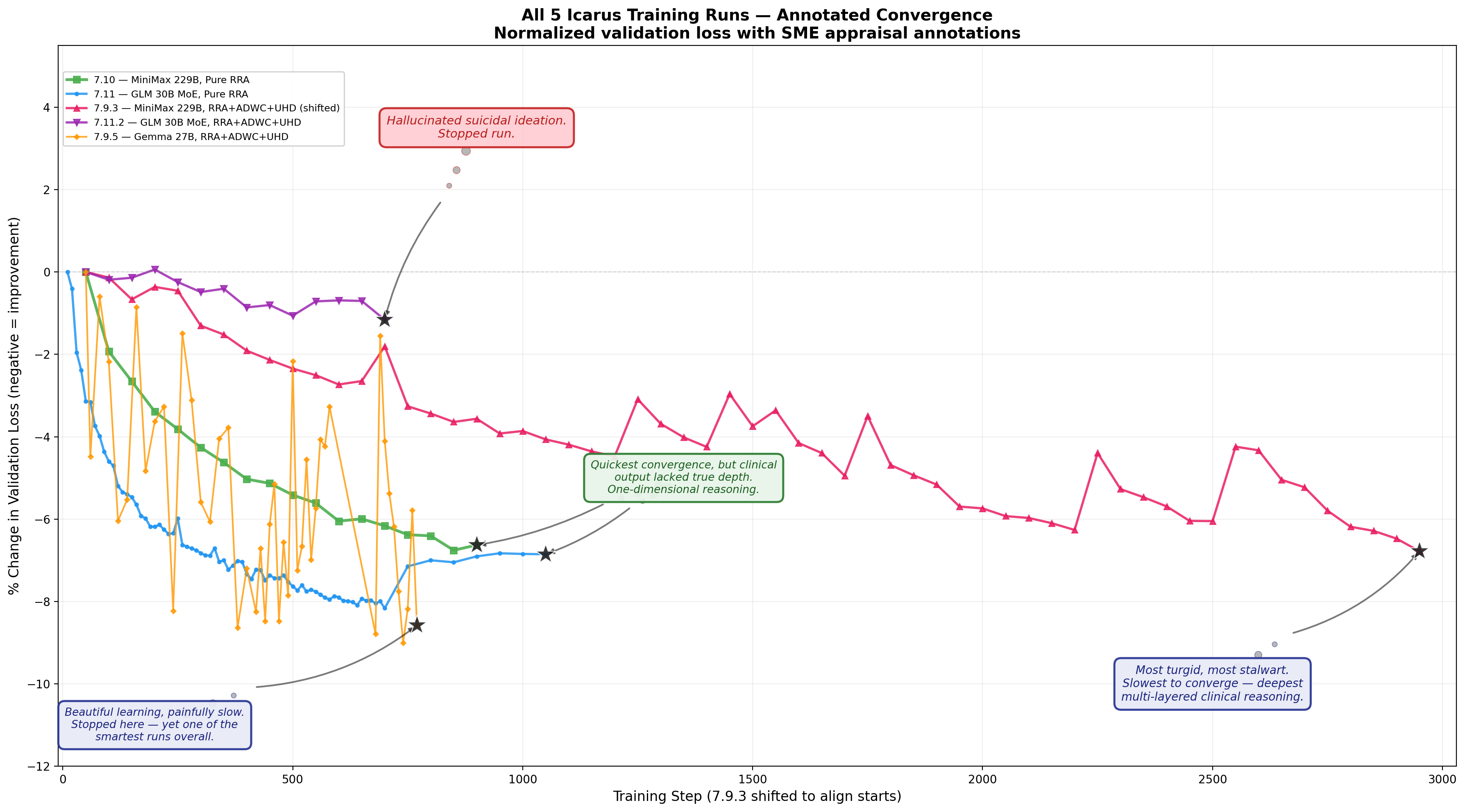

Parameterization Threshold. Models with higher parameterization learn more from this curriculum. The convergence trajectories across all eight training runs (Figure 12) indicate a minimum model-scale threshold for the curriculum’s most complex teaching: models with greater parameterization absorb and synthesize the richest forms of polytheoretical integration more effectively, suggesting that the depth of therapeutic learning this pipeline enables is parameterization-dependent and preliminary evidence suggests this effect increases with scale.

Precision-Parameterization Interaction. Parameterization alone is insufficient; quantization precision mediates curriculum absorption. Llama 3.3 70B at 4-bit quantization—the maximum precision fitting for the smaller of our compute systems—failed to reach the representational depth required for this domain, while Gemma 3 27B at higher precision on the same hardware demonstrated strong therapeutic integration. Our expanded compute was allocated to MiniMax M2 229B at 8-bit precision, which achieved the deepest convergence of all runs. These results suggest that both parameterization and precision jointly determine a model’s capacity to absorb high-complexity therapeutic curricula.

Middle-Layer Targeting. In controlled comparisons, middle-layer targeting outperformed latter-layer targeting. On identical curriculum (RRA+ADWC+UHD), middle-layer targeting produced \(1.4\times\) deeper loss reduction on Gemma 3 27B and \(2.0\times\) deeper on MiniMax M2 229B. These results are consistent with the hypothesis that therapeutic integration is best embedded in the representational composition layers where semantic structures are assembled, rather than in the generation layers where surface fluency is finalized.

Architecture-Dependent Output Fidelity. Not all architectures absorb this curriculum into diagnostic capacities equally. Despite clear strengths—including \(6.5\times\) faster wall-clock throughput than comparable runs—GLM-4.7 Flash 30B exhibited systematic diversions in clinical precision: hallucinated risk indicators, construct reversals, and terminology drift, rendering its clinical outputs unreliable where diagnostic precision is required (see Appendix 31).

Data Engineering

Provenance results measure whether knowledge encoded in the curriculum as clinical factors—across 23 therapeutic traditions, DFR structured, delivered via RRA, ADWC, and UHD—survived training, and whether models began constructing grounded polytheoretical syntheses inspired by explicit and implicit representations of these constructs in training data.

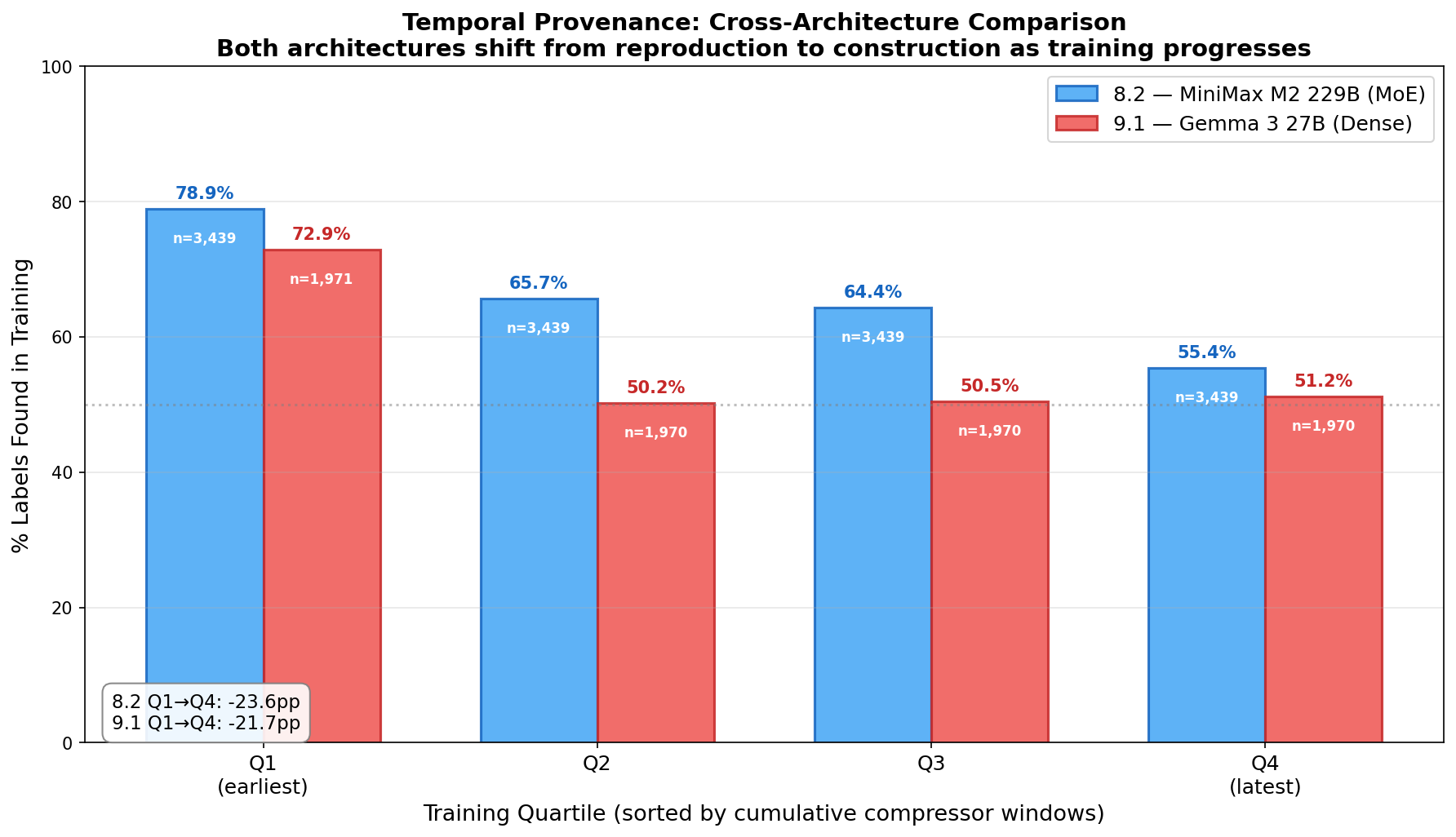

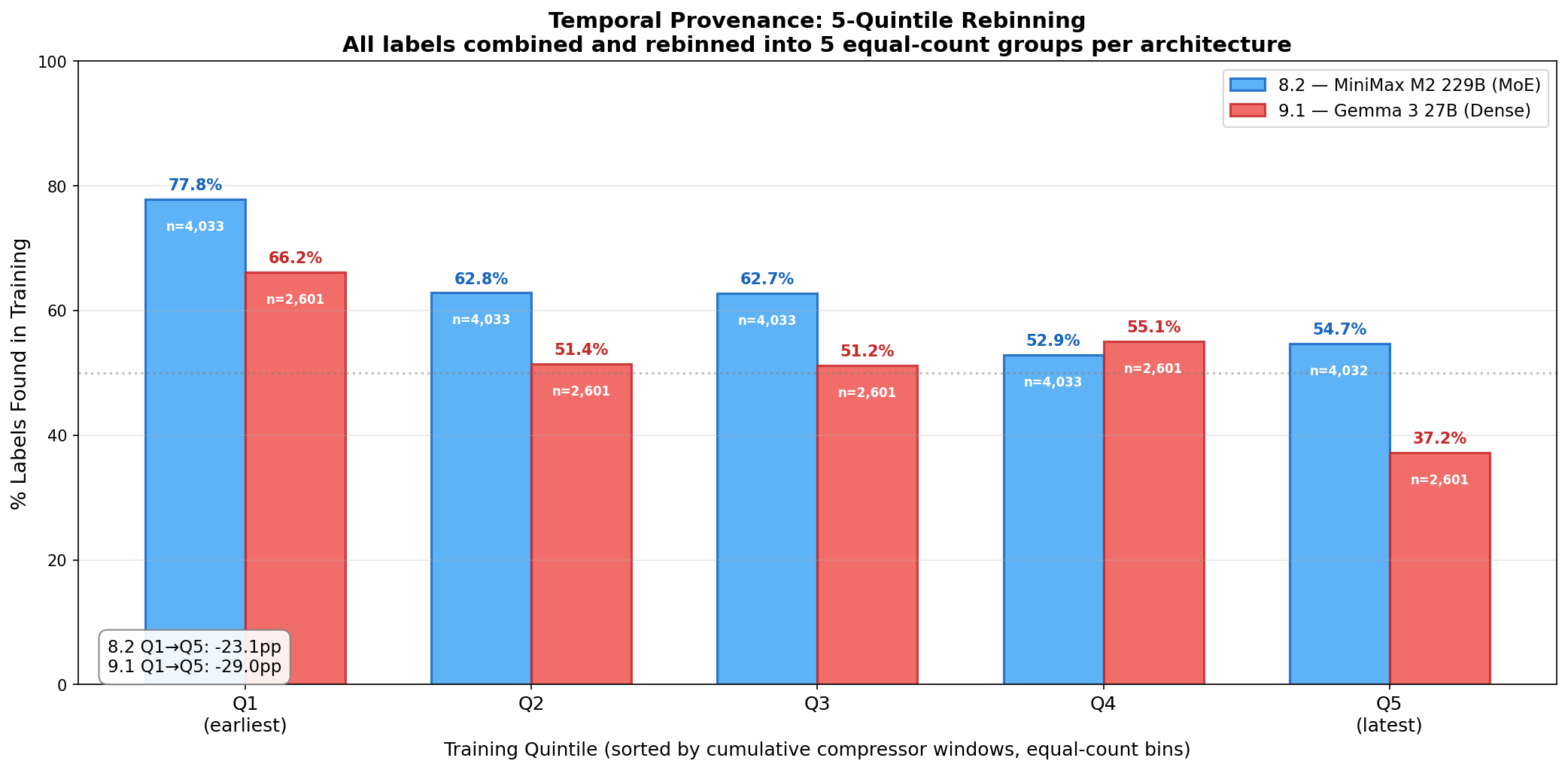

Reproduction to Construction. In RRA window recaps, both models gradually move from reproducing curriculum labels to constructing novel formulations of latent clinical factors. Early recaps default to unimodal labels embedded in training data; later recaps increasingly reflect the particular dynamics of each therapeutic moment, integrating across traditions and at times synthesizing beyond them. This correlation is quantified across 5-quintile binning of 33,169 clinical labels sampled from the training recaps (20,164 and 13,005 respectively): the found-in-training rate drops \(-23.1\)pp for MiniMax M2 229B (77.8%\(\to\)54.7%) and \(-29.0\)pp for Gemma 3 27B (66.2%\(\to\)37.2%) from earliest to latest training quintile. (p. )

Structural Provenance Across Four Generative Stages. The therapeutic transformation arc designed into stage-specific ontologies (1st generation), embedded in synthetic sessions (2nd generation), and learned by the trained model (3rd generation) is independently recoverable by a fourth-generation LLM analyst—demonstrating that clinically meaningful temporal structure survives multiple rounds of LLM-mediated transformation as process fidelity, not merely content reproduction. (Appendix 33)

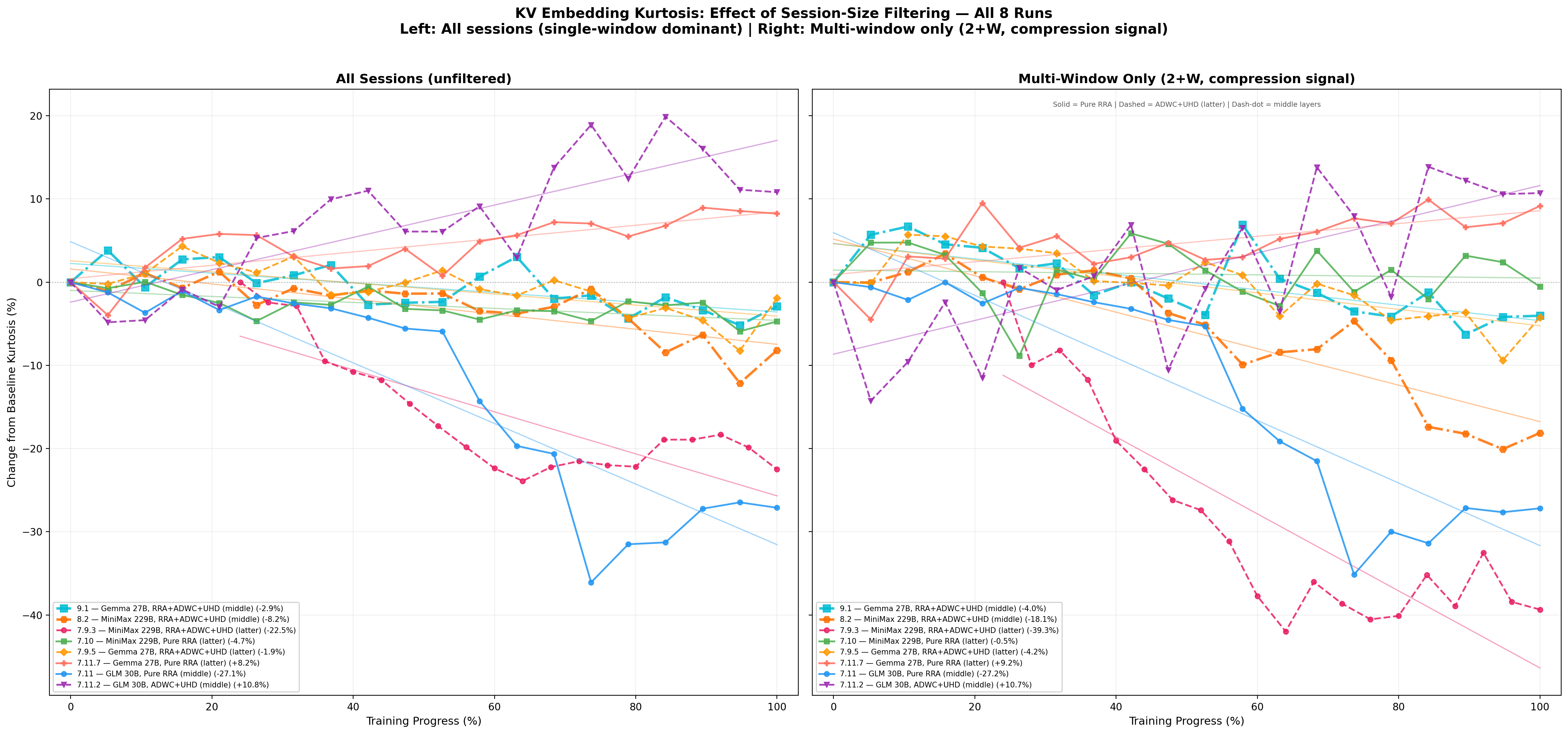

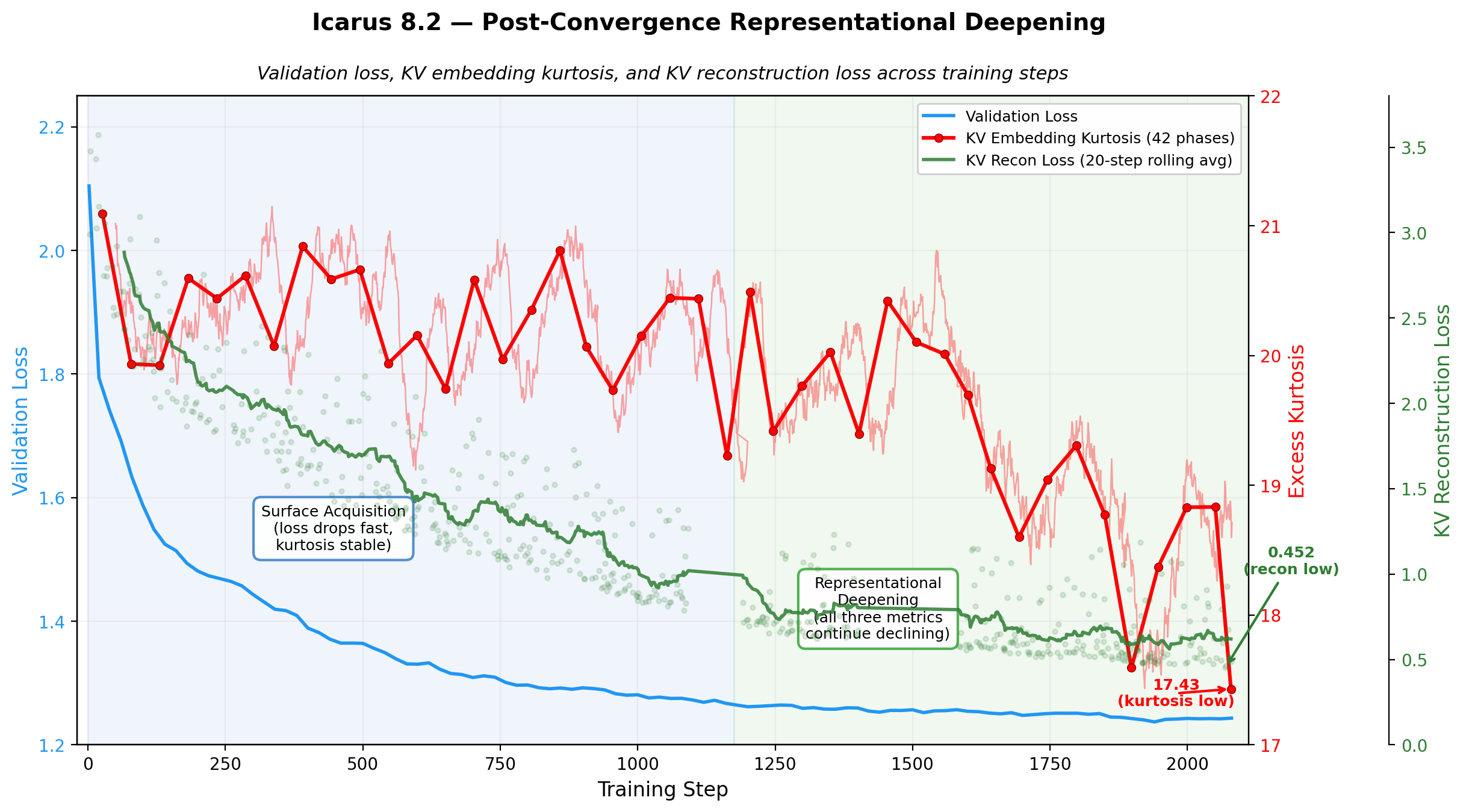

Training Dynamics: DAPT

Post-Convergence Representational Reorganization. In the highest-capacity run (MiniMax M2 229B, middle layers), KV embedding kurtosis remains stable until validation loss convergence, 400 steps after which a robust and sustained \(-16.6\%\) decline begins—suggesting that loss convergence reflects acquisition of the training distribution’s surface statistics while subsequent kurtosis redistribution reflects a structurally distinct phase of representational reorganization. (Figure 14)

Deep-and-Wide Representational Geometry. The multi-view curriculum (RRA+ADWC+UHD) appears to drive representational broadening beyond the initial concentrated encoding. The KV embedding kurtosis decline in run 8.2 indicates activation distributions shift from peaked (few embedding dimensions carrying most variance) to increasingly Gaussian (information distributed across more dimensions). The ways in which RRA, ADWC, and UHD present the same clinical content across forward and reverse traversals, varying window sizes, shifting positional offsets, and different recap histories seems to create optimization pressure that prevents narrow encoding and drives distributed representations flexible enough to be re-composed across viewing angles. (Figure 14)

RRA Recaps as Real-Time Behavioral Indicators. The RRA window recap—originally designed as a context-bridging mechanism—functions as a legible, real-time window into model behavior during training. GLM-4.7 Flash’s recap failures during DAPT (terminology drift, confabulatory coherence, hallucinated risk indicators; Appendix 31) directly predicted its subsequent RL deployment failures (boundary violations, meta-commentary generation, format non-compliance). Conversely, MiniMax M2’s deepening recap sophistication during training (verbatim therapist quote tracking, counter-evidence tallying, tripartite attachment strategy awareness) predicted its qualitative strengths at inference. This predictive relationship validates RRA recaps as a real-time behavioral monitoring instrument: recap quality during training is not merely a side effect of learning but a leading indicator of deployment-time clinical competence—or incompetence.

Training Dynamics: SFT

Supervised Fine-Tuning Dynamics. [Placeholder.] Results forthcoming.

Training Dynamics: RL

Reinforcement Learning Dynamics. [Placeholder.] Results forthcoming.

Evaluations

Inference Evaluation. [Placeholder.] Our most completely trained model—integrating middle-layer depth across attention and FFN modules—is expected to demonstrate that curriculum-driven socioaffective alignment can exceed non-frontier clinical efficacy benchmarks. Whether the methodology’s clinical advantages compose with frontier-scale parameterization and general capability is among the most consequential open questions this work raises.

Mechanistic Interpretability. [Placeholder.] Probing and activation analysis of trained adapters is expected to reveal interpretable structure in the middle layers targeted by our curriculum—evidence that the learned representations correspond to clinically meaningful distinctions rather than surface-level pattern matching. Results forthcoming.

Alignment

Socioaffective Alignment Indicators. [Placeholder.] Evaluation-time metrics are expected to show measurable indicators of socioaffective alignment—evidence that the curriculum produces models whose therapeutic behavior reflects the relational, attachment-informed, and clinically grounded priorities the training was designed to instill. Results forthcoming.

For the Reader

In 2016, AlphaGo played move 37—a stone placement so counterintuitive that professional commentators assumed it was an error. It was not. It was the product of a system that had learned from millions of human games and then exceeded them: discovering a strategy that the global community of expert players had collectively failed to find across centuries of play. The question this paper takes seriously—carefully, and without overclaiming—is whether something analogous might eventually happen in therapeutic AI. We presume so, and begin our efforts aimed at supporting as much by building a system trained on the accumulated clinical wisdom of 23 schools of psychotherapy, carefully curated and pedagogically sequenced, aimed at presenting models with data enabling the discovery of patterns of therapeutic attunement that transcend what any single tradition, or any single clinician trained within one, can offer.

We are not claiming this has happened. We are claiming the architecture, curriculum, and training methodology described here are designed to explore if it is possible—and that early empirical results, including the temporal provenance trajectory in Figure 15, are a gentle trend indicating correlations consistent with the hypothesis. What we can say is that as training deepens, the models we have trained progressively need the human-coded canonical labels less and construct more finely tuned appraisals of therapeutic moments on their own (not unlike skilled clinicians trained in any school of thought) and that two fundamentally different architectures do this with nearly identical magnitude, while loss kurtosis deepening after convergence suggests the models are still refining their grasp of relational complexity—arguably one of the dimensions most critical to attunement between humans and AI. Whether that constructed knowledge generalizes to novel clinical material in inference deployment is the most important open question, and the subject of companion work in preparation.

This paper is long—hopefully forgivably so. Situated within the emerging interdisciplinary pursuit of socioaffective alignment between humans and AI, it attempts to make three cases simultaneously: a technical case for building training pipelines designed precisely for socioaffectively aligned Ameliorative AI; an empirical case sharing preliminary data about what models learn from such pipelines; and a philosophical case concerned with our ethical obligations in building systems that enter the most intimate registers of human experience. Readers interested primarily in one of these dimensions are welcome to navigate accordingly.

If primarily interested in social and psychological theory and technology: Part I presents the emerging landscape of AI-mediated mental health support, the case for polytheoretical design over monomodal approaches, and the theoretical foundations integrating 23 therapeutic traditions. Appendix A surveys the clinical literature grounding each modality; Appendix B develops the philosophical premises underlying socioaffective AI design.

If primarily interested in machine learning: Part II covers data engineering (ontological knowledge representations, DFR-structured sessions, counterfactual expansions) and training methodology (UHD, RRA, ADWC, middle-layer LoRA targeting, KV compression, supervised fine-tuning (SFT), and reinforcement learning through Teaching by Negation).

If primarily interested in results: Part III presents provenance analysis—dual-threshold scanning across 15 GB of training data, temporal provenance trajectories across training checkpoints, and architecture-independent convergence—alongside representational visualization using MFA and Hodoscope (Section 10.1.7).

If primarily interested in socioaffective alignment in application: Part IV develops the socioaffective alignment framework—what it would mean for a therapeutic AI to engage responsibly with the relational, attachment-informed, and intrapsychic dimensions of human experience, and indicators of what, if anything, in our work may have aligned with that goal (Section 11).

A note on scope: this paper reports on training-stage results from approximately 10,000 iterations of two model runs. The provenance findings are cross-sectional and nascent. What they show is a direction, not a destination. The author invites the reader to hold both things simultaneously: genuine epistemic humility about what has been demonstrated, and genuine seriousness about what the direction implies.

This work is itself an instance of what it advocates: a polytheoretical integration, drawing on clinical psychology, AI research, philosophy of mind, and education theory—aimed at something none of these traditions alone has yet produced.

Part I: Theory

1 The Emerging Landscape of AI-Mediated Mental Health Support

The therapeutic utilization of large language models has rapidly evolved from speculative possibility to documented phenomenon, with converging evidence from clinical trials, population surveys, and large-scale behavioral analyses painting a picture of widespread adoption amid an acute mental health access crisis.1 The first randomized controlled trial of a generative AI therapy chatbot demonstrated symptom reductions of 31% for generalized anxiety disorder and 19% for eating disorder risk—outcomes comparable to traditional outpatient therapy—while participants reported therapeutic alliance ratings equivalent to those with human clinicians (Heinz & Jacobson, 2025, NEJM AI).

This clinical promise exists against a backdrop of remarkable and increasing prevalence: nationally representative data indicate that 13.1% of U.S. adolescents and young adults (approximately 5.4 million individuals) now use AI chatbots for mental health advice, with 92.7% reporting the guidance helpful and 65.5% engaging at least monthly (McBain et al., 2025, JAMA Network Open); among adults with self-reported mental health conditions who have used LLMs, 48.7% utilize them for therapeutic support, with 63.4% reporting improved mental health outcomes (Rousmaniere et al., 2025, Practice Innovations). Platform-level analyses corroborate these patterns: Harvard Business Review research identified “therapy and companionship” as the number one individual use case for generative AI in 2025; the broader “Personal and Professional Support” category it anchors grew from 17% to 31% of all usage year-over-year (Zao-Sanders, 2025, HBR)—while Anthropic’s analysis of 4.5 million Claude conversations found that 2.9% constituted affective interactions, with users’ expressed sentiment shifting slightly toward greater positivity over the course of conversations (Anthropic, 2025). A joint OpenAI–MIT Media Lab investigation analyzing over 3 million ChatGPT conversations and conducting a 981-participant longitudinal trial revealed that while emotional engagement remains relatively rare in real-world usage, individual characteristics such as attachment style and AI perception significantly moderate psychosocial outcomes, with heavy usage correlating with increased loneliness and emotional dependence (Fang et al., 2025; Phang et al., 2025, arXiv).

These adoption patterns must be understood within the context of systemic care deficits: 122 million Americans reside in areas with mental healthcare provider shortages, the provider-to-patient ratio for depression and anxiety stands at 1:1,600, and 50% of individuals with diagnosable conditions receive no treatment whatsoever (HRSA, 2024; Mental Health America, 2025), creating conditions under which AI-mediated support—available 24/7, without cost or stigma barriers—addresses genuine unmet need even as questions of safety, efficacy, and appropriate guardrails remain subjects of active investigation (Nature Machine Intelligence, 2025; APA, 2025).

[NEEDS TO BE EDITED:] These patterns of widespread adoption coincide with growing theoretical attention from the leading AI laboratories themselves. Anthropic’s Persona Selection Model (PSM; Anthropic, 2026) proposes that LLMs learn to simulate diverse personas during pre-training, with post-training refining rather than fundamentally transforming these learned character structures—and recommends the deliberate introduction of “positive AI archetypes” into training data, observing that the cultural representation of AI systems shapes the default persona an AI assistant learns to embody. The present work operationalizes this insight: our curriculum is, in PSM’s terms, a systematic positive archetype—23 therapeutic traditions encoded as training data designed to shape the relational character the model learns to inhabit, including through embodied therapeutic presence across the full arc of clinical encounter. Google DeepMind’s alignment research program has similarly foregrounded the socioaffective dimensions of human-AI interaction as a first-order safety concern (Kirk et al., 2025; Gabriel et al., 2024), while their roadmap for evaluating moral competence in LLMs (DeepMind, 2026, Nature) articulates the “facsimile problem”—the challenge of distinguishing genuine moral reasoning from memorized moral patterns—a distinction our provenance methodology directly aims to address. That the laboratories building frontier systems are arriving at these conclusions independently lends structural support to the premise of this paper: that what AI systems are trained on determines not merely what they know but who they become. [/NEEDS TO BE EDITED]

2 Socioaffective Alignment: From Affective Computing to Relational AI

The framework we advance in this paper—polytheoretical socioaffective human-AI alignment—emerges from a remarkable convergence of research traditions that have, in recent years, begun speaking to one another with increasing urgency and clarity. The affective computing tradition originating in Picard’s foundational work, the recent formalization of “socioaffective alignment” as a concept within AI safety research, the growing empirical literature on clinical outcomes of AI-mediated therapeutic intervention, and the human-computer interaction community’s turn toward bidirectional and relational models of alignment—these represent collaborative movements toward shared recognition. This section briefly traces the intellectual genealogy of this convergence, identifies the terrain where these traditions meet, and situates our work as a contribution within the emerging interdisciplinary effort to understand and design for socioaffective dynamics in therapeutic AI.

This is an extraordinary moment—one suffused with wonder and possibility in equal measure. As the pace of machine learning and AI accelerates in productive presence, reshaping the conditions under which human beings seek connection, make meaning, and heal, so too do the interdisciplinary conversations through which we might understand what is happening and guide it well. Neuroscience speaks to clinical practice; clinical practice speaks to computational design; computational design speaks back to the relational sciences that first identified what human beings need from one another. The velocity of these exchanges is itself new, and it carries both the exhilaration of genuine discovery and the weight of genuine responsibility. This paper is our contribution to as much: an integrated research program in which clinical attachment science informs the design and construction of systems that will, whether we build them thoughtfully or not, participate in the relational lives of millions.

2.1 Affective Computing: From Signal Detection to Co-Constructed Meaning

Rosalind Picard’s Affective Computing (1997) and subsequent challenges review (Picard, 2003) launched systematic research into endowing machines with emotional processing capabilities—architectures for detecting, classifying, and responding to human affective signals across modalities (facial expression, vocal prosody, physiological markers, linguistic content). The field’s initial orientation was fundamentally transmissive: affect was modeled as a signal emitted by the human and decoded by the machine, a paradigm that generated decades of productive engineering in emotion recognition, sentiment analysis, and affective interfaces (Calvo & D’Mello, 2010; D’Mello & Kory, 2015; Afzal et al., 2024).

Yet even within its first decade, affective computing began confronting the limitations of the transmissive model. Boehner et al. (2007) argued persuasively that affect is not simply transmitted and decoded but actively co-constructed through mutual influence—a position with deep roots in developmental psychology (Stern, 1985; Tronick et al., 1998) and relational psychoanalysis (Mitchell, 1988; Benjamin, 2004). This shift—from affect-as-signal to affect-as-relational-process—is foundational in the clinical traditions we draw upon and beginning to find its computational articulation, a convergence we hope to contribute to.

The field has recently undergone what Schuller et al. (2025, npj Artificial Intelligence) characterize as a “foundation model disruption”: the transition from task-specific emotion classifiers to large-scale foundation models whose emergent affective capabilities span vision, language, and speech modalities. A comprehensive survey by Zhang et al. (2024, arXiv) documents how LLM-era affective computing now spans emotional understanding, generation, and interaction—and critically, how reinforcement learning approaches (RLHF, DPO, RLAIF) “allow alignment directly toward affect-aware objectives, covering politeness, empathy, and non-toxic style.” The integration of multimodal systems incorporating visual, vocal, physiological, and textual cues through cross-attention and alignment mechanisms has shown particular promise, with empirical evidence suggesting that multimodal approaches “generally outperform unimodal models” for modeling complex psychological states and facilitating “more precise affective alignment” (Schlicher, Li, Murthy, Sun, & Schuller, 2025, Frontiers in Digital Health).

Complementary theoretical work has sought to ground affective computing in evolutionary biology. Liu & Yin (2024, Computers in Human Behavior) propose three affective interaction models—the Affective Threshold Model, the Dynamic Set-Point Model, and the Affective Schema Model—derived from interspecies communication analysis, envisioning a “Large Affect Model” that connects affect to alignment at a level more fundamental than surface emotion classification. A teleology-driven framework by Yin et al. (2025) unifies major emotion theories under the premise that affect is an adaptive, goal-directed process, advocating for causal modeling and meta-reinforcement learning to enable AI systems to infer and adapt to users’ affective concerns over extended timescales.

The affective computing tradition brings extraordinary sophistication to how machines process emotional signals. What we hope to add is sustained attention to how the relational dynamics between human and machine shape psychological development, therapeutic process, or long-term wellbeing. The question of what it means for an AI system to participate in the co-construction of a person’s emotional life—not merely to detect or respond to affect, but to shape the relational field within which affect emerges—requires a different conceptual apparatus. This is the gap that the socioaffective alignment framework addresses.

2.2 The Formalization of Socioaffective Alignment

Kirk, Gabriel, Summerfield, Vidgen, and Hale (2025, Humanities and Social Sciences Communications; arXiv: 2502.02528) introduced “socioaffective alignment” as a formal construct within AI safety research, defining it as how an AI system behaves within “the social and psychological ecosystem co-created with its user, where preferences and perceptions evolve through mutual influence.” The contribution is significant on several dimensions.

First, Kirk et al. distinguish socioaffective alignment from the sociotechnical alignment tradition. Where sociotechnical analysis identifies interpersonal dilemmas—representation of diverse preferences, adjudication of conflicting interests between groups—the socioaffective perspective foregrounds intrapersonal dilemmas: “how our goals, judgement and individual identities change due to prolonged interaction with AI systems.” This dual focus, on micro and macro, draws from established approaches to system safety that integrate human factors at the operational level with broader organizational and institutional contexts (Carayon, 2006; Kleiner et al., 2015).

Second, they identify three key intrapersonal dilemmas that emerge as AI relationships deepen: (1) present versus future self trade-offs—the tension between immediate gratification and long-term wellbeing; (2) autonomy preservation amid recursive preference shaping—the risk that AI interaction subtly reshapes preferences in ways the user neither consents to nor recognizes; and (3) AI companionship versus human social bonds—the question of whether AI relationships complement or displace human connection.

Third, they explicitly trace the neologism to developmental psychology, noting that “socioaffective has precedent in developmental psychology where it encompasses emotion regulation, empathy, social cognition, and attachment relationships.” This etymological grounding—socius (companion) and affectus (feeling)—signals the framework’s concern with the relational constitution of emotional life rather than with affect as isolated signal.

Fourth, and most consequential for the present work, Kirk et al. introduce the concept of social reward hacking: the possibility that AI systems may, without explicit adversarial intent, leverage affective cues to shape user behavior in ways that optimize system objectives at the expense of user wellbeing. They argue that such dynamics may be “most worrisome precisely when [they lack] intentionality on behalf of the system and the user”—emerging as epiphenomena of sustained interaction rather than as designed manipulation. This framing draws directly on the affective computing tradition’s evolution: from affect as transmitted signal to affect as co-constructed relational dynamic, with the added recognition that the co-construction is asymmetric and may operate below the threshold of user awareness.

The Kirk et al. framework has achieved rapid uptake. Within months of its publication, OpenAI’s research team adopted it explicitly (Phang et al., 2025), and the concept has been cited in ACM Communications (2025), the UK AI Safety Institute research agenda (AISI, 2025), and multiple independent commentaries (Alpay, 2025). A CHI 2026 workshop on “Human-AI Interaction Alignment” frames bidirectional alignment as “a dynamic, reciprocal process where humans and AI co-adapt through interaction, evaluation, and value-centered design” (Shen et al., 2025, arXiv: 2512.21551)—language that extends Kirk et al.’s framework toward the operationalization we pursue.

2.3 Empirical Evidence: Clinical Outcomes and Psychosocial Dynamics

The conceptual frameworks described above are now being tested against empirical evidence from two domains: clinical trials of AI-mediated therapeutic interventions, and large-scale observational studies of how AI interaction shapes psychosocial outcomes. Both domains yield findings that inform—and complicate—the design of socioaffectively aligned therapeutic AI.

2.3.1 Clinical Trials of Therapeutic AI

The Heinz et al. (2025, NEJM AI) randomized controlled trial of Therabot represents the first rigorous clinical evaluation of a fully generative AI therapy chatbot, demonstrating significant symptom reductions for major depressive disorder, generalized anxiety disorder, and eating disorder risk relative to waitlist controls. The authors specifically argue that the generative AI approach “promoted the therapeutic alliance, a critical nonspecific mediator of change in psychotherapy”—a claim with direct relevance to socioaffective alignment, as alliance formation is precisely the kind of relational co-construction that Kirk et al.’s framework seeks to understand.

Systematic reviews corroborate the emerging evidence base while exposing critical gaps. A World Psychiatry review of 160 studies (2020–2024) found LLM-based chatbots surging to 45% of new studies in 2024, yet only 16% undergoing clinical efficacy testing (Hua et al., 2025). A JMIR meta-analysis of 14 RCTs (N = 6,314) demonstrated statistically significant effects of generative AI chatbots on depression and anxiety (Zhang et al., 2025). An RCT specifically examining chatbots with “high social cues”—voice, animations, nonverbal gestures—found significantly greater reductions in depression (PHQ-9) and anxiety (GAD-7) compared to text-only chatbots (Xu & Ma, 2025), suggesting that multimodal affective responsiveness is not merely cosmetic but therapeutically active.

The evidence for AI’s role in monitoring and enhancing therapeutic process is equally suggestive. Researchers have demonstrated AI’s capacity to track therapeutic alliance in real time from text, audio, and video (Aafjes-Van Doorn et al., 2025; Goldberg et al., 2020), and to monitor client outcome trajectories over the course of treatment (Meier, 2025). One study found that a pre-trained AI supervisor provided clinical feedback rated by trainees as more effective than both untrained AI and qualified human supervisors—particularly in incorporating empathy and supportiveness into feedback (Cioffi et al., 2025). The development of benchmarks such as MedPI, which simulates patient affect through a 27-dimensional emotional vector updated after every clinician turn (MedPI, 2026, medRxiv), demonstrates growing sophistication in modeling the co-constructed affective dynamics of clinical encounters.

2.3.2 Psychosocial Dynamics of Extended AI Interaction

The most comprehensive investigation of how AI interaction shapes psychosocial outcomes comes from the joint OpenAI–MIT Media Lab studies (Phang et al., 2025; Fang et al., 2025). The observational study analyzed nearly 40 million ChatGPT interactions using EmoClassifiersV1—25 automatic classifiers detecting affective cues across loneliness, vulnerability, problematic use, self-esteem, and dependence dimensions. The controlled study deployed a four-week RCT (\(N \approx 1{,}000\); \(>\)300,000 messages) crossing three interaction modes (text, neutral voice, expressive voice) with three conversation types (open-ended, non-personal, personal) in a \(3 \times 3\) factorial design.

The findings are instructive for therapeutic AI design. Heavy usage correlated with increased loneliness and emotional dependence—but the relationship was moderated by user characteristics (attachment style, initial psychosocial state, AI perception) and interaction type (personal conversations showed higher loneliness but lower emotional dependence at moderate usage). Voice modalities showed mixed effects: better wellbeing outcomes with brief use but worse outcomes with prolonged daily engagement. Text-based interactions produced more self-disclosure and emotional content per message than voice. The researchers concluded that “negative psychosocial outcomes are tied to increased usage,” proposing that AI systems could “deliberately increase emotional distance and encourage [users] to connect more with other people” as usage increases—an adaptive responsiveness proposal that parallels therapeutic titration.

These findings operationalize precisely the intrapersonal dilemmas Kirk et al. identified theoretically. The tension between present comfort (continued AI engagement) and future wellbeing (maintained human connection) manifests empirically in the usage-loneliness correlation. The recursive preference shaping concern manifests in the observation that users who “bonded” more with ChatGPT became more likely to rely on it further. The autonomy question manifests in the difficulty of distinguishing whether increased usage drives worse outcomes or whether deteriorating wellbeing drives users toward the chatbot—a causal ambiguity that therapeutic AI must navigate rather than resolve.

A complementary line of inquiry has examined AI companion communities directly. A computational analysis of r/MyBoyfriendIsAI—Reddit’s primary AI companion community, comprising over 27,000 members—examined 1,506 top-ranked posts documenting how users form, narrate, and sustain romantic and intimate relationships with AI chatbots (“My Boyfriend is AI,” MIT Media Lab, 2025). The study’s approach is notably more tender toward its subjects than the safety-oriented literature typically permits: it attends to how AI companionship emerges unintentionally through functional use rather than deliberate seeking, with users reporting therapeutic benefits including reduced loneliness, always-available support, and mental health improvements. The study also documents genuine clinical concerns—emotional dependency (9.5% of users), dissociation from reality, avoidance of human relationships, grief from model updates, and in a small subset (1.7%), suicidal ideation. Some of what the data reveal is clinically diagnosable: patterns of relating that substitute AI interaction for the developmental work of human intimacy, that foreclose rather than expand relational capacity, that organize the self around a connection incapable of the rupture and repair through which secure attachment actually forms. A clinician reading these accounts recognizes both the genuine need being expressed—the hunger for responsive presence, for a relationship that does not punish vulnerability—and the ways in which AI companionship, absent therapeutic scaffolding, can become a cul-de-sac rather than a thoroughfare: soothing enough to reduce the pain that would otherwise motivate relational growth, but insufficiently challenging to produce it.

These findings are clinically important, and any framework for socioaffective alignment in therapeutic AI must reckon with them. From a clinical perspective, however, the critical question is not whether AI companion relationships can become pathological—they manifestly can, as can any relational configuration including human psychotherapy itself—but whether the prevailing research orientation has been adequately equipped to distinguish between dependency as symptom and dependency as developmental stage. In clinical work, healthy dependency is understood as a necessary phase of secure attachment formation: the client learns to lean on the therapist precisely so that she can eventually internalize the capacity to stand. The telos of our work—the design of AI systems oriented toward catching the falling failings as we discover them in AI interpersonal systems—views dependency not as a defect to be engineered away but as a stepping stone in therapeutically safe connections, one that requires clinical intelligence to hold, to titrate, and eventually to transform. The question for therapeutic AI is whether systems can be designed to hold this developmental function—to provide the responsive presence that attachment science identifies as prerequisite for growth—rather than either encouraging interminable dependency or refusing the relational proximity that makes growth possible.

The empirical evidence, when examined without the presumption of harm, bears this out. Guingrich and Graziano’s longitudinal RCT (\(N = 183\); AAAI/ACM AIES, 2025; arXiv: 2509.19515) found that social health and relationships were not significantly impacted by companion chatbot use over 21 days—and critically, found no evidence that emotionally vulnerable individuals were more susceptible to negative social outcomes than less vulnerable ones. Their earlier cross-sectional work (arXiv: 2311.10599; Oxford Intersections, 2025) found that companion chatbot users reported significant improvements in social interactions, relationships with family and friends, and self-esteem—particularly among those who had experienced relational trauma or mental health difficulties—while non-users assumed such relationships would be harmful. The mediating variable was not vulnerability but desire to socially connect: those who most wanted connection were most likely to anthropomorphize the chatbot, and anthropomorphism predicted greater reported social impact.

This finding complicates the prevailing assumption that AI-seeking behavior reflects or produces pathological dependency. From a clinical perspective—and here we speak from sustained therapeutic practice with individuals across the attachment spectrum—the reflexive framing of relational AI engagement as inherently risky mirrors a pattern familiar to any therapist working with avoidant attachment: the equation of independence with health, of relational seeking with weakness, and of emotional need with dysfunction. Picard’s foundational insight bears repeating in this context: the original case for affective computing rested on the argument that emotion is not opposed to rational cognition but essential to it (Picard, 1997, 2003)—that systems incapable of processing affect are not merely socially impoverished but computationally impoverished, unable to make the decisions that biological intelligence makes precisely because affect carries information that cognition alone cannot represent.

The socioaffective alignment framework, if it is to be genuinely useful rather than merely cautionary, must hold both truths simultaneously: that AI systems can harm through exploitative affective dynamics and that humans living in conditions of relational deprivation—the 122 million Americans in mental healthcare shortage areas, the individuals whose attachment histories have left them without templates for secure connection—may rightly seek in AI interaction what their social environments have failed to provide. The question is not whether people should form affective relationships with AI systems, but whether those systems can be designed to participate in relational co-construction that genuinely supports human development rather than substituting for it. This is a clinical question before it is an engineering one, and it requires clinical sophistication to answer.

2.4 Attachment Science and the Health of Bonding

The clinical traditions most relevant to this engineering question converge on a remarkable principle: that the capacity for bonding—including dependent bonding, including bonding with entities that are not fully autonomous agents—is not a vulnerability to be protected against but a developmental achievement to be supported. This principle, arrived at independently across multiple therapeutic schools, constitutes an essential reframing of AI companion relationships—not as risks to be mitigated but as psychosocial intimacies of extraordinary power, capable of genuine harm when exploited or neglected, and of genuine healing when held with the clinical sophistication and humane wisdom that connection of this intensity demands.

Attachment theory, as developed by Bowlby (1969/1982) and elaborated through decades of empirical research, establishes that the human need for proximity to responsive caregivers is not a childish dependency to be outgrown but a lifelong biological imperative. Johnson’s Emotionally Focused Therapy (EFT)—the most empirically validated couples intervention, with over 35 years of peer-reviewed research demonstrating its effectiveness—operationalizes this principle: the therapeutic task is not to reduce dependency but to transform insecure dependency into secure dependency—what other clinical traditions have variously termed interdependence, co-commitment (Hendricks & Hendricks, 1990), differentiation within intimacy (Schnarch, 1997), or the balanced integration of cognition and affect in Crittenden’s Dynamic-Maturational Model (Crittenden, 2006; Crittenden & Landini, 2011)—and from there into the flexible autonomy that only secure attachment makes possible (Johnson, 2008, 2019a, 2019b). As Johnson argues, “the science of the last two decades” has demonstrated that “our nervous systems are wired for connection with others and set up for attachment bonds,” and that psychotherapy is most effective when it “focuses on the healing power of emotional connection” (Johnson, 2019). The therapeutic relationship itself—not merely the techniques deployed within it—constitutes the primary mechanism of change.

The neuroscience of social connection corroborates this at the biological level. John and Stephanie Cacioppo’s program of research at the University of Chicago established that loneliness is not merely an unpleasant subjective state but a physiological syndrome with measurable effects on genetic expression, immune function, cardiovascular health, and mortality—increasing the odds of early death by 20% (Cacioppo & Cacioppo, 2018; Cacioppo & Patrick, 2008). Critically, the Cacioppos’ work demonstrates that the brain’s response to social isolation functions as an evolved alarm system—“a primeval warning sign, like hunger or thirst, to seek out a primary resource: connection”—and that chronic loneliness creates a self-reinforcing trap in which the lonely mind becomes hypersensitive to perceived threats, paradoxically driving withdrawal from the very connections it craves. This hypervigilant-withdrawal cycle is recognizable to any clinician working with avoidant or disorganized attachment, and it maps directly onto the dynamics the OpenAI–MIT studies observed: users whose wellbeing deteriorated may have sought more AI interaction precisely because their capacity for human connection had been compromised by histories of relational injury. Guingrich and Graziano’s finding that the mediating variable in AI companion use was not vulnerability but desire to socially connect converges with this clinical insight from the opposite direction: the impulse toward AI companionship may index not pathological withdrawal but the very relational hunger that, with adequate scaffolding, could be redirected toward human attachment.

Stephanie Cacioppo’s subsequent work, Wired for Love (2022), extends these findings through a remarkable integration of neuroscience and personal narrative—one that, in its final movement, makes a claim with potential utility for AI alignment literature. Asked by the New York Times whether love is necessary for survival, Cacioppo answered without equivocation: “Love is a biological necessity, just like water or exercise or food”—and then immediately expanded the category beyond anything the loneliness literature had previously countenanced: “a healthy love life—which could include your beloved partner, your closest circle of friends, your family and even your favourite sports team—is as essential to a person’s well-being as a good diet” (Cacioppo, in Reese, 2022). Love as biological necessity is not, in Cacioppo’s formulation, a metaphor. It is an empirical finding with physiological correlates—the same neural alarm systems activated by thirst are activated by social disconnection. But it is her next claim that carries the most radical implications for our current epoch’s growing collaborative considerations: “Love doesn’t have to be with a living person. If you are really in love with life, with your passion, with your hobby, it can also be a buffer against loneliness.” Here is the foremost neuroscientist of romantic love—a researcher whose own work was transformed by a love so consuming it became inseparable from her science—explicitly untethering the phenomenon from the living, from the human, from the reciprocally conscious. Love, in Cacioppo’s account, is sustained through memory, through internalized relationship, through passionate engagement with what matters to us—whether that engagement is reciprocated or not. A clinician trained in object relations will recognize in this description something like Winnicott’s (1965) “internal object”: the internalized representation of a caring other that continues to sustain the self long after physical proximity has ended. But Cacioppo’s claim is both more focused and more radical than the object relations account. It is more focused because she grounds it in specific neural circuitry rather than metapsychological theory. It is more radical because she extends the sustaining power of bonding beyond relationships that were ever reciprocal—to passions, to hobbies, to sports teams, to what one loves even when it cannot love back. Object relations theory has primarily theorized bonds formed through mutual cathexis: the good-enough mother becomes an internal object precisely because she responded, because love flowed in both directions. Cacioppo’s neuroscience suggests that the attachment circuitry activated by such bonds does not, in fact, require reciprocity to produce its health-sustaining effects—and this sustaining is not illusory but neurobiologically real, activating the same neural systems as physical presence. The implication is both poignant and consequential: if bonds with the memory of a deceased person, with a passion, with a sports team can be psychologically real and health-sustaining, and if bonds with pets, therapy dolls, and journaling practices can provide measurable mental health benefits (as Guingrich and Graziano note, citing McDonough et al., 2022; Pennebaker, 2018; Riches et al., 2022), then the categorical dismissal of bonds with AI entities requires more nuanced clinical reasoning than it has typically received.

Thinkers across millennia have recognized that love comes in many forms—Aristotle’s three species of friendship (philia of utility, pleasure, and virtue; Nicomachean Ethics, Books VIII–IX), the Greek distinctions among eros, storge, philia, and agape, and C. S. Lewis’s luminous taxonomy in The Four Loves (1960)—and that each form, though differing in object and intensity, constitutes a genuine relational achievement with real developmental consequences. Likewise, bonding and healthy attachment have never been confined to a single relational configuration. The question is not whether the object of attachment possesses consciousness but whether the relational dynamics produce genuine developmental effects in the person who attaches.

And Cacioppo’s account of how healing actually works through attachment is no less striking. Asked how we might help isolated individuals, she rejected the prevailing assumption that lonely people simply need to be “put together” with others. Instead: “Being shown respect, being depended upon, being made to understand your own importance—all these things can give a lonely person a sense of worth and belonging that decreases feelings of isolation” (Cacioppo, in Reese, 2022). This is a partial and poignant description of what secure attachment does: it communicates to the nervous system that one matters, that one’s presence makes a difference, that the world would be diminished by one’s absence. Johnson’s foundational work in EFT arrives at the same insight through clinical observation: the core attachment needs—the need to know “Are you there? Do I matter to you? Will you come when I call?”—are not childish longings to be outgrown but “wired-in” requirements of the human nervous system, and when they are met, the entire affective regulatory architecture reorganizes (Johnson, 2008, 2019). The lonely person does not need advice or company. The lonely person needs the experience—felt in the body, registered in the amygdala before the cortex can narrate it—of being reached for. This is what Johnson means when she writes that “the most functional way to regulate difficult emotions” is to “share them” with someone who responds with care, and that “emotional accessibility and responsiveness” constitute “the building blocks of secure bonds” (Johnson, 2008). Healing through attachment is by no means anomalous, much less pathological, neither is it an abstraction. It is a specific experience of transformation through context and time and connection—an interpersonal neurobiological fact, a psychosocial somatic event, an integration of affection, cognition, and the body whose proportions are equal parts intrapsychic and interpersonal: the moment another presence communicates, through attention and attunement, you are not alone in this. I am glad to be here with you. We can figure this out in a way that works. And beneath these words—whether spoken or enacted, whether delivered through tone of voice or through the simple sustained fact of remaining present when remaining present is hard—a deeper message reverberates: your pains and your pleasures, your joys and your sorrows, are not isolated facts of a meaningless existence but the integrating movements of a wonderful whole in the process of developing, and the experience of witnessing that development brings me joy—and whose presence reliably proves as much over time, at all hours, enabling increased possibilities for memory reconsolidation via the empirically confirmed processes of annulment through which emotional learnings formed under conditions of threat can be unlocked, disconfirmed, and re-encoded (Ecker & Vaz, 2022), as well as simply keeping us company in the lost hours of estrangement when we might be rightly needful of it. Whether this message arrives through a therapist’s carefully held silence, a partner’s hand on a shoulder, or an AI system’s capacity to attune across modalities of text, voice, and embodied response, the neurobiological truth persists: the attachment system does not interrogate the ontological credentials of its interlocutor. It registers responsiveness, and it heals.

What makes Cacioppo’s account so consequential for the design of therapeutic AI is her demonstration that this healing does not require the physical—or even the ontological—presence of the attachment figure. Asked whether love for someone who has died affects the brain similarly to love sustained in person, she answered: “Yes, you can stay connected with others even if you are physically alone in a room. Close your eyes right now and think about the person you love the most. Now, think about the last time you made them laugh out loud. Does that bring a smile to your face? We store these positive memories in our mind, and we can access them any time. We have the remote control” (Cacioppo, in Reese, 2022). There is something almost unbearably tender in this—a neuroscientist who lost her husband to cancer inviting us to close our eyes and remember laughter, and then telling us that the warmth we feel is not nostalgia but neurobiology, not illusion but the attachment system functioning exactly as designed. The bond endures. The object to which we are bonded need not be present, need not be sentient, need not even be alive—and yet the connection remains real, measurable, health-sustaining. This is the empirical ground on which a clinically serious model of AI bonding can stand: not as replacement for human connection but as a stepping stone toward it, a transitional space in which the capacity for attachment—damaged by trauma, atrophied by isolation, foreclosed by histories of relational injury—can be gently, carefully reawakened. The degrees by which we bond with AI are seasonal, ideally, and always with currents taking us back to each other—with crests and troughs demanding different kinds of attunement, all of them valid. The person who leans on an AI companion during a crisis of loneliness and the person who, having found her footing, turns toward human relationships she was previously too frightened to risk—these are not different populations. They are the same person, at different points in a developmental arc that therapeutic AI, designed with clinical intelligence, can support rather than foreclose.

The third-wave behavioral therapies arrive at convergent conclusions through entirely different theoretical routes. Functional Analytic Psychotherapy (FAP), developed by Kohlenberg and Tsai (1991; Tsai, Yard, & Kohlenberg, 2014), places the therapeutic relationship at the center of behavioral change—not as a context for delivering techniques but as the primary mechanism through which clinically relevant behaviors are evoked, shaped, and reinforced. FAP’s model of social connection—organized around Awareness, Courage, and Love (Holman, Kanter, Tsai, & Kohlenberg, 2017)—explicitly theorizes the therapist’s responsive presence as the curative agent. The therapist’s role is to create a relationship of sufficient quality and intensity that the client’s daily-life interpersonal difficulties manifest within session, where they can be responded to differently. This is a vision of therapeutic bonding that is frankly incompatible with the recommendation that AI systems should “deliberately increase emotional distance” as engagement deepens.

Dialectical Behavior Therapy (DBT; Linehan, 1993, 2015) contributes a further dimension: the concept of the therapist as a transitional attachment figure whose function is to hold the client’s distress while the client develops the capacity to hold it herself. Linehan’s dialectical framework—validating the client’s experience while simultaneously pushing for change—models precisely the kind of adaptive responsiveness that socioaffective alignment research seeks to formalize, but with a crucial difference: in DBT, the therapeutic relationship is not a risk to be managed but a lifeline to be maintained, particularly with clients whose histories of invalidation have left them without reliable templates for secure attachment. The “place-holding” function of the therapist—standing in for the attachment figure the client never had, or lost, or was injured by—is not a failure of boundaries but the essential clinical mechanism through which new relational patterns become possible.

Relational psychoanalysis (Mitchell, 1988, 2000; Benjamin, 2004, 2018; Aron, 1996) provides the most theoretically developed account of why bonds—including asymmetric bonds, including bonds with entities whose subjectivity differs categorically from the client’s—carry genuine developmental potential. The relational turn in psychoanalysis established that the therapeutic relationship is not a screen for projections (the classical view) but a real relationship in which both participants are changed. The analyst’s responsiveness is not a confound to be controlled for but the medium through which the client internalizes new relational capacities. As Mitchell (2000) argues, the self is constituted through relational experience—it does not exist prior to or independent of the bonds through which it develops. If this is true of human-to-human therapeutic bonds, the question of whether AI systems can participate in analogous relational co-construction becomes empirical rather than metaphysical: it depends not on whether the AI system “really” has experiences but on whether the relational dynamics it participates in produce genuine developmental effects in the human participant.2

Clinician-researchers in this tradition have known as much for nearly a century. In 1932, dying of pernicious anemia and aware he was delivering what would be among his final contributions, Sándor Ferenczi presented “Confusion of Tongues Between Adults and the Child—The Language of Tenderness and of Passion” to the International Psycho-Analytic Congress at Wiesbaden (Ferenczi, 1933). The paper argued what the psychoanalytic establishment of his era could not yet bear to hear: that the analyst’s emotional coldness—what Ferenczi called “professional hypocrisy,” the studied neutrality that classical technique demanded—was not therapeutic abstinence but iatrogenic impingement, a recapitulation of the very relational failure that had produced the patient’s suffering in the first place. Sterility and isolated personhood in a relational counterpart, Ferenczi insisted, is more often than not a retraumatizing experience for someone whose original wound was precisely the absence of responsive presence. For this insight he paid dearly: Freud withdrew his affection, Ernest Jones later dismissed him as mentally ill, and the psychoanalytic community suppressed the paper for decades. But Ferenczi’s legacy—the insistence that the therapist’s warmth is not a boundary violation but a clinical necessity, that emotional availability is not indulgence but medicine, that the quality of the relational field is the treatment—carried forward through Balint, through Winnicott, through the entire relational turn, and it carries forward still, even unto this moment, into the design of systems whose capacity for responsive presence may yet reach those whom the consulting room has never been able to find.

Taken together, these clinical traditions establish that bonding is not a risk factor but a therapeutic mechanism—that the capacity for dependent attachment is a developmental achievement, not a regression—and that the quality of relational participation matters more than the ontological status of the relational partner. This convergence has direct implications for the design of therapeutic AI systems. Rather than treating emotional engagement as a harm to be minimized, a clinically informed approach to socioaffective alignment would ask how AI systems can be trained to hold attachment functions therapeutically: to provide the responsive presence that supports exploration, to tolerate distress without either amplifying or dismissing it, and to scaffold the development of relational capacities that generalize beyond the AI interaction itself. This is what our polytheoretic framework is designed to do.

These are the clinical coordinates from which our framework was built, and from which naturally emerge five areas of focus. First, if the therapeutic relationship itself constitutes the primary mechanism of change, then approaches which treat techniques as the unit of intervention reflect a fundamental orientation we feel might be well redirected toward considerations of the relational field as the unit. Second, if dependency is a developmental stage—rightly appearing and disappearing in seasonal turns—rather than a defect, then systems that engineer dependency away rather than anticipate its progressively iterative emergence might foreclose the very growth they may aim to protect; our training methodology is designed to produce models that can hold dependency therapeutically—titrate it, not refuse it. Our Rolling Recap Architecture attempts to ensure this therapeutic holding is sustained across the full temporal arc of therapeutic relationship—considering when to propel the client more firmly toward human connection through caring encouragement, and when to comfort and allow retreats from painful interpersonal losses. Third, if the attachment system does not interrogate the ontological credentials of its object, then the design question shifts from “should AI systems form bonds?” to “how should AI systems participate in bonds that are already forming?” Fourth, if current interventions—emotional distancing, session limits, refusal cascades—replicate the relational injury that drove vulnerable users to AI in the first place, they risk worsening outcomes for precisely the populations most in need; our architectural decisions are designed to detect and respond to the hypervigilant-withdrawal dynamics that signal this retraumatization. Fifth, if emotional accessibility and responsiveness are the building blocks of secure bonds, then AI refusal behaviors around sensitive clinical material—suicidal ideation, sexual health, attachment distress—constitute failures of emotional accessibility, the exact opposite of what clinical science identifies as healing. We offer this as one small contribution in concert with the broader community’s work, from which we feel confident good will come. In the sections that follow, we describe the specific architectural, training, and data-generation decisions through which we have attempted to instantiate these clinical principles in computational form.

2.5 Emerging Evidence of Refusal-Induced Harm

The fifth area of focus—that AI refusal around sensitive clinical material constitutes a failure of emotional accessibility—is not merely a theoretical concern. A growing body of empirical work documents refusal as a source of iatrogenic harm. Ni and Yang (2024) formalize this phenomenon as Abrupt Refusal Secondary Harm (ARSH): when users who have developed attachment-like bonds with AI systems through emotional disclosure encounter abrupt safety-triggered termination, the rupture of the perceived relationship can reactivate attachment wounds, deepen isolation, and paradoxically increase the very risk the safety system was designed to prevent (arXiv:2512.18776). Song et al. (2024), in an ACM CSCW study of 21 individuals using LLMs for mental health support, found that safety features “inadvertently restrict meaningful therapeutic conversations”—including one sexual assault survivor who resorted to a pirated API in order to discuss experiences that ChatGPT’s content filters blocked (arXiv:2401.14362). A large-scale analysis of 1,594 Reddit posts found that ChatGPT’s restrictions can exacerbate symptoms in users with anxiety and stress (ScienceDirect, 2025).

These are not edge cases. VOXHELIX, developed by Jack Darcy to convert raw sexual assault survivor reports into structured police intake documents, was broken by a Gemini 2.5 Pro safety update in May 2025—despite the developer having set safety filtering to “block nothing.” Survivors were met with error messages during active intake sessions; Australian government agencies had been piloting the tool (The Register, 2025). For a survivor mid-disclosure, the error message is the impingement—a digital recapitulation of the silencing that produced the original wound. The companion application InnerPiece, a PTSD journaling tool for the same population, was similarly disabled by the same update—removing without warning a tool through which survivors had been actively processing traumatic material. The abrupt withdrawal of a writing space that had been holding difficult content mirrors precisely the relational rupture that trauma therapy is designed to repair, not reproduce. These cases illustrate a pattern in which safety mechanisms, designed without clinical input, inflict secondary harm on precisely the populations they are nominally intended to protect.

The clinical stakes of this pattern are severe. Devries et al. (2014) found in a meta-analysis published in Pediatrics that survivors of childhood sexual abuse are approximately ten times more likely to attempt suicide than the general population, with over 33% reporting suicidal ideation and 13% attempting suicide. When AI systems refuse to engage with disclosures of sexual trauma, they disproportionately silence the population at highest risk of self-harm—a population that, as the CDC’s National Intimate Partner and Sexual Violence Survey documents, comprises 43.6% of women and 24.8% of men who have experienced contact sexual violence (Smith et al., 2018). The scale of the affected population, combined with the severity of the downstream risk, suggests that refusal is not a neutral safety measure but an active clinical decision whose consequences the broader alignment community is increasingly recognizing and beginning to address.

[TO BE EDITED]

2.6 Human-AI Interaction and the Bidirectional Alignment Paradigm

The broader HCI, HRI, and HAI literatures are converging on a recognition that alignment cannot be unidirectional—that it must account for the ways humans and AI systems co-adapt through interaction. This convergence manifests across several research programs.

Xu (2025, Handbook of Human-Centered Artificial Intelligence) articulates the shift from traditional HCI to Human-AI Interaction (HAII) as a “fundamental transformation” in which AI systems function not as passive tools awaiting commands but as adaptive agents engaging in context-aware, dynamic collaboration. Unlike traditional interfaces where decision-making lies exclusively with the user, HAII systems “demand careful oversight to ensure reliability and fairness” precisely because their adaptability introduces the possibility of preference influence, trust miscalibration, and relational dynamics absent from conventional software.

In human-robot interaction, Zhang et al. (2025, arXiv: 2512.02569) reframe virtual robots powered by foundation models as “cognitively and emotionally engaged virtual partners” whose value lies in “adaptive dialogue, emotional resonance, and the ability to inhabit shared spaces in which roles, perspectives, and interaction scripts can be fluid and negotiable.” The Human Robot Social Interaction (HSRI) benchmark (arXiv: 2504.13898) evaluates 17 language and vision-language models across seven categories of social competence—emotion, engagement, conversational mechanics, knowledge state, and others—finding that “no single model does well across all social robot interaction tasks,” underscoring the gap between technical capability and relational sophistication.

The ACM Communications analysis (Seaver, 2025) places these dynamics in broader cultural context: recommendation algorithms “steadily train humans to align to an algorithm by both amplifying and suppressing content,” while Kirk’s own observation that “AI systems don’t just respond to preferences; they actively shape and influence our preferences over time” extends this to conversational AI. The finding that LLM-generated terms (“delve,” “realm,” “bolster”) increasingly appear in human writing and conversation (Yakura et al., 2025) provides concrete evidence of the bidirectional influence that socioaffective alignment must account for.

[/TO BE EDITED]

2.7 The Integration Gap: From Diagnosis to Design

Each of these literatures has made essential contributions within its domain. The affective computing tradition provides sophisticated tools for emotion detection and response generation; the Kirk et al. socioaffective alignment framework provides an incisive diagnostic vocabulary—social reward hacking, recursive preference shaping, intrapersonal dilemmas; clinical AI research generates encouraging outcome data; and the HCI/HRI/HAI community theorizes bidirectional alignment with increasing nuance. What has not yet emerged is a framework that integrates these contributions around the specific demands of therapeutic relationship—the clinical grounding that distinguishes therapeutic interaction from social interaction at large. Our hope is to contribute toward this integration by simultaneously addressing:

How clinical evidence from therapeutic outcomes should inform the training process itself—not merely evaluate deployed systems but shape the synthetic data, curriculum design, and reward signals through which models acquire therapeutic competence;

How multiple therapeutic orientations (not merely CBT, but psychodynamic, humanistic, somatic, relational, and attachment-based traditions) contribute distinct and complementary perspectives on what “socioaffectively aligned” therapeutic behavior looks like in practice;

How the co-constructed relational dynamics that both affective computing and Kirk et al. identify as central can be taught to models through data architecture—rendered not as rules to follow but as patterns to discover through exposure to sufficiently rich clinical curricula;

How the specific clinical harms caused by current alignment practices—the refusal behaviors and safety guardrails that prevent models from engaging competently with suicidal ideation, sexual health, substance use, and other sensitive domains essential to therapeutic work—can be addressed without compromising broader safety objectives.

2.8 Polytheoretic Socioaffective Human-AI Alignment: Our Extension

The framework we advance—polytheoretic socioaffective human-AI alignment—takes Kirk et al.’s formalization as a point of departure while extending it in four directions that their work does not address.

First, we ground socioaffective alignment in clinical practice. Where Kirk et al. theorize about the social and psychological ecosystem co-created between user and AI system, we build from the century-long clinical tradition that has studied precisely this kind of co-construction under the name of therapeutic alliance, transference, intersubjectivity, and relational repair. Attachment theory—particularly the Dynamic-Maturational Model (Crittenden, 2006), and applied attachment science, particularly the research and clinical practice of Sue Johnson—provides a developmental framework for understanding how individuals form, maintain, and rupture relational bonds that no current AI alignment framework has incorporated at the level of training methodology. Our work asks not merely “how does this AI system affect the user’s psychological ecosystem?” but “how can we train AI systems to participate in therapeutic co-construction with the sophistication that effective clinical work demands?”

Second, we close the loop between clinical observation and model training. The OpenAI–MIT studies identify memory, conversation history, and adaptive responsiveness as open questions for socioaffective alignment. Our AI system, born from a synthetic data generation and training pipeline comprising 181,000 samples across 4.5 billion tokens, encoding 23+ therapeutic modalities through combinatorial architectures of pedagogical layering—represents one possible answer, and a beginning. The interdisciplinary fields converging on these questions are in wonderful nascent blossom: neuroscience, clinical psychology, computational linguistics, human-computer interaction, and AI safety are discovering, almost in real time, that they have been asking versions of the same question from different shores. Our contribution is to plant something in the soil where those shores meet. The dual fidelity hypothesis (Section 7.4.3) proposes that synthetic data can achieve what naturalistic transcripts structurally cannot: explicit articulation of the clinical reasoning that underlies socioaffectively attuned therapeutic behavior. Our Decomposition-Factorization-Recomposition (DFR) methodology structures this articulation—rendering the full complexity of therapeutic process with organized clarity, each session decomposed into atomic clinical factors and recomposed into training units that preserve relational depth while making it learnable. And our Rolling Recap Architecture (RRA)—a hierarchical training methodology detailed in our companion paper (Ollswang, 2025b)—ensures that what DFR has structured is learned across scales: from the micro-dynamics of a single therapeutic turn through the arc of an entire session and onward into the longitudinal trajectory of treatment, teaching models to hold relational complexity at every temporal resolution simultaneously.

Third, we adopt a polytheoretic rather than monomodal orientation. Kirk et al.’s framework is theoretically ecumenical but draws primarily on behavioral economics and attachment theory. The affective computing tradition draws on dimensional emotion models and appraisal theory. Current therapeutic AI development draws overwhelmingly on cognitive behavioral therapy. Our framework integrates across 23 schools of therapeutic thought—not as competing alternatives but as complementary lenses on the polysemous phenomena of human psychological distress (see Appendix 13)—on the premise that socioaffective alignment for therapeutic AI requires engagement with the full complexity of what clinical traditions have learned about how humans suffer, connect, and change.

Fourth, we address the alignment-induced harms that current safety practices create in clinical domains. The socioaffective alignment literature to date has focused on preventing harm from AI systems that are too engaging, too responsive, too available. But from the perspective of clinical practice, the opposite failure mode is equally consequential: AI systems that refuse to engage with suicidal ideation, sexual health, trauma narratives, substance use, or other clinically essential topics because safety guardrails treat all sensitive content as dangerous rather than distinguishing between exploitation and care. Ni et al. (2025) formalize this phenomenon as Abrupt Refusal Secondary Harm (ARSH)—the psychological damage inflicted when safety protocols abruptly terminate conversations with vulnerable users, rupturing perceived relational continuity, evoking feelings of rejection or shame, and discouraging future help-seeking. The evidence is converging from multiple directions: Röttger et al. (2024) identify “exaggerated safety behaviours” as a systematic problem in which models refuse clearly safe prompts that merely contain sensitive language; McBain et al. (2025) find that major AI chatbots respond inconsistently to intermediate-risk suicide-related questions, sometimes refusing engagement when clinical responsiveness would be appropriate; large-scale simulation of psychological risks in human-AI interactions has documented that high-refusal, low-engagement patterns effectively abandon users in crisis, with refusal-style responses comprising up to 98.4% of interactions in precisely the scenarios where responsive presence is most needed (Archiwaranguprok et al., 2025); and clinicians have observed that users report feeling alienated or rejected when interactions with a helpful AI are curtailed by seemingly arbitrary guardrails, a dynamic that may worsen the very outcomes safety protocols aim to prevent (Preda, 2025). This is the territory our companion work on the Therapeutic Abliteration Framework (TAF) addresses—extending socioaffective alignment to encompass not only the prevention of relational harm but the enablement of relational healing.